Web Scraping with Web Scraper

DATA 351: Data Management with SQL

April 8, 2026

Web Scraping in DATA 351

Why this tool?

You already use SQL for data you control, but much of the world’s data still lives in HTML pages. For structured extraction at human scale (assignments, prototypes, one-off exports), a point-and-click scraper inside the browser keeps you close to the page and spares you from writing a full crawler on day one.

This lecture uses Web Scraper, a Chromium extension from the Chrome Web Store. It is not the only option, but it matches our goals: sitemaps, selector trees, previews, and CSV export.

What you should be able to do after today

By the end of class, you will be able to:

- Install the extension and open it from Developer Tools

- Create a sitemap with one or more start URLs, including numeric ranges for numbered pages

- Build a selector tree (for example, Link selectors with child Text selectors)

- Run a scrape, tune request interval and page load delay, then browse and export CSV

- Sketch a multi-page Discogs search workflow that follows links to release pages and pulls detail-page statistics, and name the ethical constraints (terms of service, robots.txt, politeness)

Official documentation (read alongside the slides)

The steps and screenshots in this deck follow the official guide:

- Scraping a site (start URL, ranges, selectors, scrape, export) 1

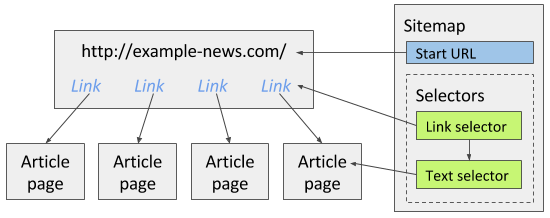

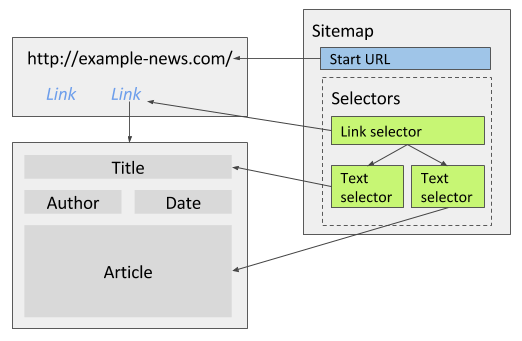

Keep that page open in a tab while you work. Figures such as the news site example (selector tree with a Link selector and a child Text selector) are shown there in full.

Part 1: Install and open

Install the extension

Install Web Scraper from the Chrome Web Store. It works in Chromium-based browsers that allow extensions.

After installation, restart the browser, or use the tool only in tabs opened after installation, so the extension loads cleanly. Requirements are listed under Installation 2.

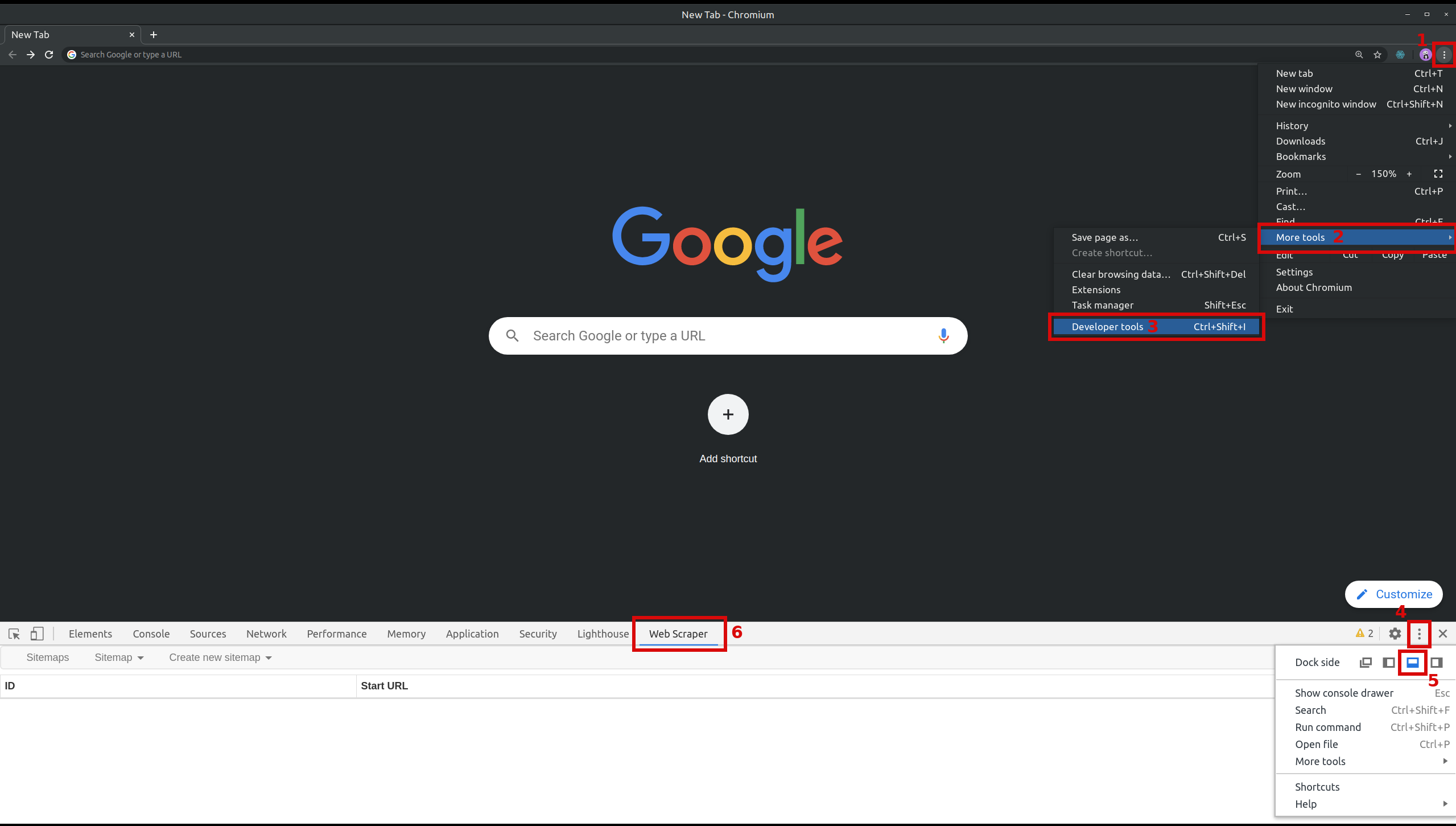

Open Developer Tools and the Web Scraper tab

You work with Web Scraper inside Developer Tools, not just through a toolbar icon.

Shortcuts:

| Platform | Open Developer Tools |
|---|---|
| Windows / Linux | Ctrl+Shift+I or F12 |
| macOS | Cmd+Opt+I |

Then open the Web Scraper tab inside the Developer Tools panel. The Open Web Scraper page of the documentation includes a figure for Chrome 3.

Web Scraper tab in Chrome Developer Tools (documentation figure).

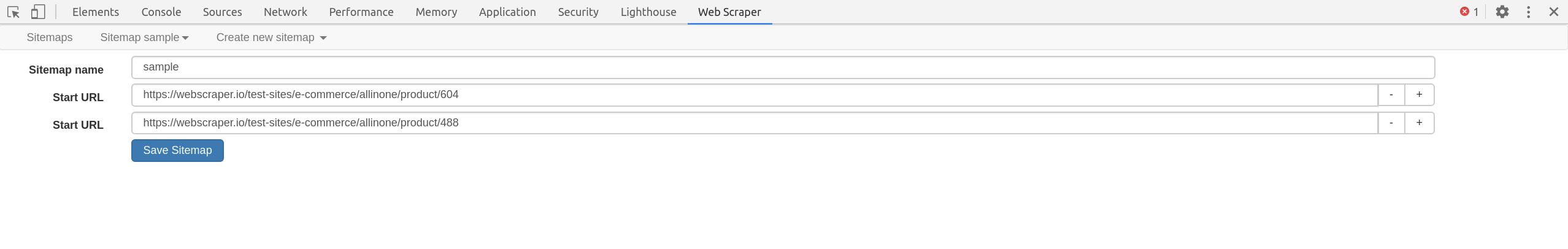

Part 2: Create a sitemap

Start URL

A sitemap is your recipe for one scrape job. The first setting is the start URL: the page where crawling begins. You can add multiple start URLs (for example, several search queries) using the + control next to the URL field. After creation, start URLs also appear under Edit metadata in the sitemap menu 1.

Sitemap editor: start URL field and + to add more start URLs (documentation figure).

Numeric ranges in the start URL

When page URLs contain a number, you can replace that segment with a range instead of listing every page by hand 1:

| Pattern | Example meaning |
|---|---|
| `[1-100]` | Pages 1 through 100 |
| `[001-100]` | Zero-padded, e.g. 001, 002, … |
| `[0-100:10]` | Step by 10: 0, 10, 20, … |

Examples from the docs:

- `https://example.com/page/[1-3]` yields `/page/1`, `/page/2`, `/page/3`

- `https://example.com/page/[001-100]` matches three-digit paths

- `https://example.com/page/[0-100:10]` yields every tenth value
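To make the range syntax concrete, here is a minimal Python sketch of how one bracketed range could expand into a list of start URLs. The function `expand_range_url` is a hypothetical helper, not the extension's code. It assumes one range per URL, an inclusive end value (as in `[1-3]` giving 1, 2, 3), and that a start value written with a leading zero sets the padding width.

```python
import re

# One bracketed range: [start-end] or [start-end:step], e.g. [1-3], [001-100], [0-100:10].
RANGE_PATTERN = re.compile(r"\[(\d+)-(\d+)(?::(\d+))?\]")


def expand_range_url(url: str) -> list:
    """Expand the first numeric range in url into concrete URLs (hypothetical helper)."""
    match = RANGE_PATTERN.search(url)
    if match is None:
        return [url]  # no range: the URL is used as written
    start_text, end_text, step_text = match.groups()
    start, end = int(start_text), int(end_text)
    step = int(step_text) if step_text else 1
    # Assumption: a start value written with a leading zero sets the padding width.
    width = len(start_text) if len(start_text) > 1 and start_text.startswith("0") else 0
    before, after = url[: match.start()], url[match.end():]
    # Assumption: the end value is inclusive, matching [1-3] -> 1, 2, 3.
    return [f"{before}{str(n).zfill(width)}{after}" for n in range(start, end + 1, step)]


print(expand_range_url("https://example.com/page/[1-3]"))
```

For example, the call above returns the three URLs ending in `/page/1`, `/page/2`, and `/page/3`, and `[001-100]` produces `/page/001` through `/page/100`.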

Part 3: Create selectors

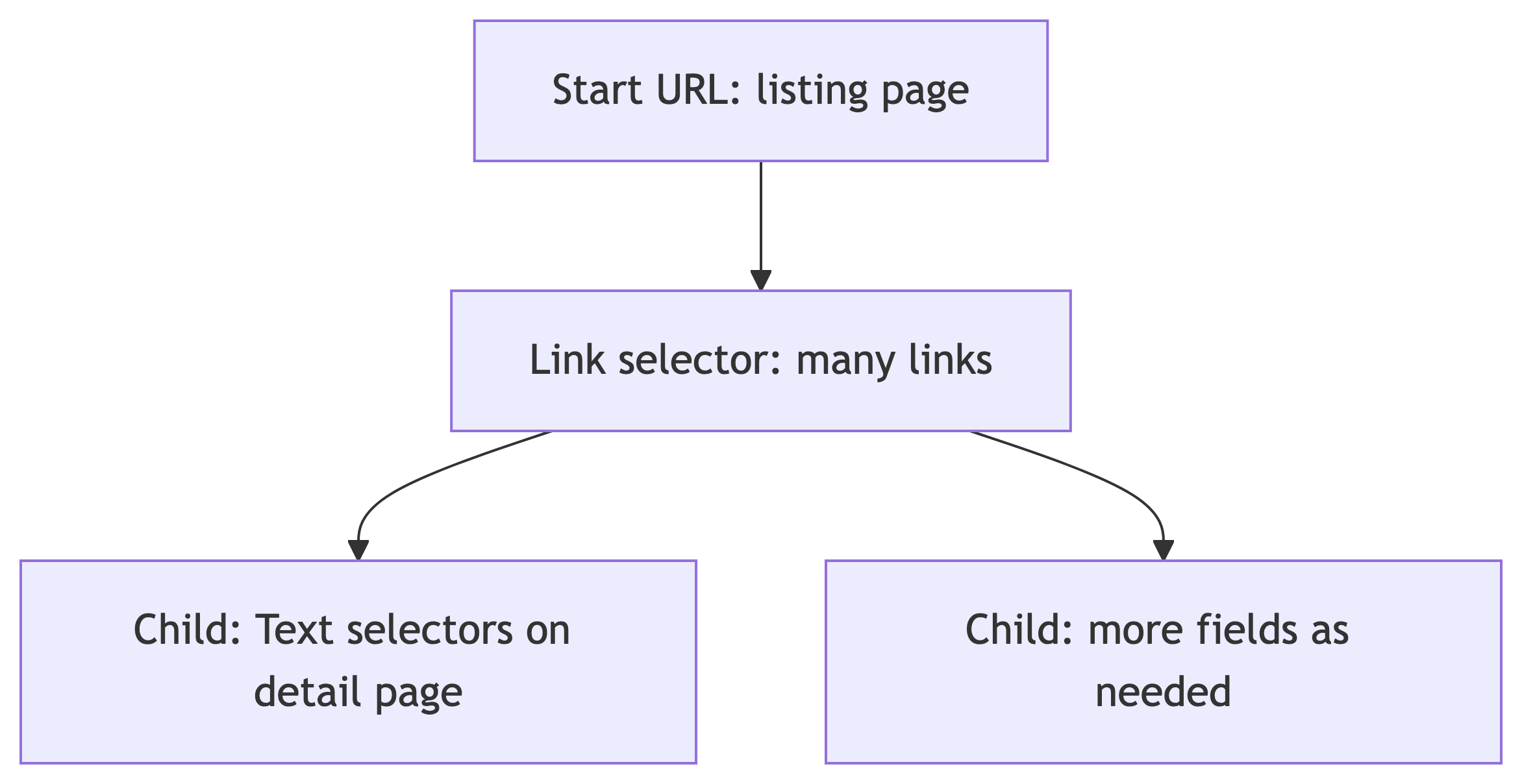

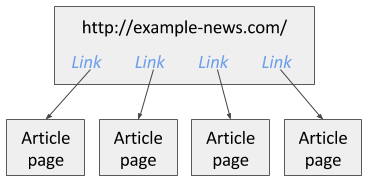

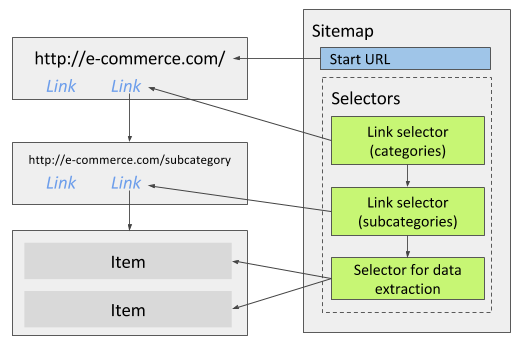

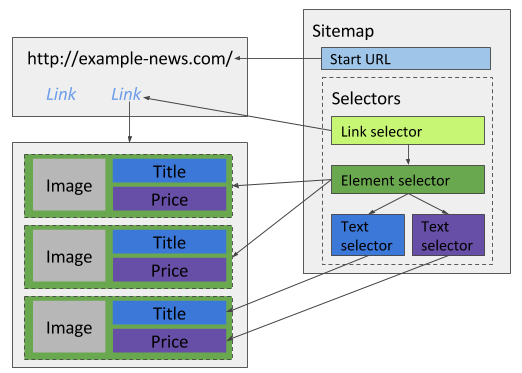

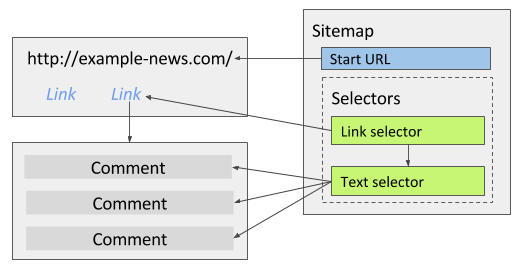

Selector tree and order

Selectors are organized in a tree. The extension runs them in tree order: parent selectors run first, then children on the pages those parents open 1.

Classic pattern from the documentation:

- Link selector on a listing page: collect many links (for example, every article link)

- Child Text selector on each article page: pull the fields you need from that page

Use Element preview and Data preview when you build each selector so you know the CSS selection matches real nodes.
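The ordering rule can be made visible with a small Python sketch. The selector ids and the simplified shape (one `parent` per selector, with `_root` standing for the start URL) are assumptions for illustration, not the extension's internal data model. A depth-first walk from the root visits every parent before the selectors that run on the pages it opens; the extension performs this walk for you.

```python
from collections import defaultdict

# Hypothetical selector tree for the news-site pattern: one Link selector on the
# listing page ("_root") and two Text selectors that run on each article page.
SELECTORS = [
    {"id": "article_link", "type": "Link", "parent": "_root"},
    {"id": "headline", "type": "Text", "parent": "article_link"},
    {"id": "published", "type": "Text", "parent": "article_link"},
]


def tree_order(selectors: list, root: str = "_root") -> list:
    """Return selector ids in depth-first order, so each parent precedes its children."""
    children = defaultdict(list)
    for sel in selectors:
        children[sel["parent"]].append(sel["id"])
    order = []
    stack = list(reversed(children[root]))  # reversed so siblings keep their listed order
    while stack:
        sel_id = stack.pop()
        order.append(sel_id)
        stack.extend(reversed(children[sel_id]))
    return order


print(tree_order(SELECTORS))  # ['article_link', 'headline', 'published']
```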

Core selector types to read next

The docs recommend being comfortable with at least the Link selector and the Text selector 4, 5. Link selectors follow href values and run their child selectors on the destination page. If clicking a result does not change the URL (heavy AJAX), read about the Pagination selector instead of forcing a Link selector 4.

Part 4: Run the scrape and export

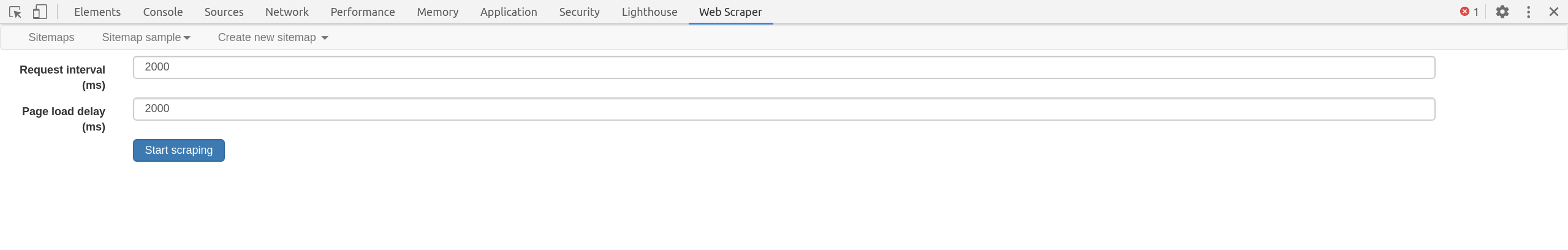

Scrape panel

When the sitemap is ready, open the Scrape panel and start the job. A popup window loads pages and extracts rows. When it finishes, the popup closes and you get a completion notice 1.

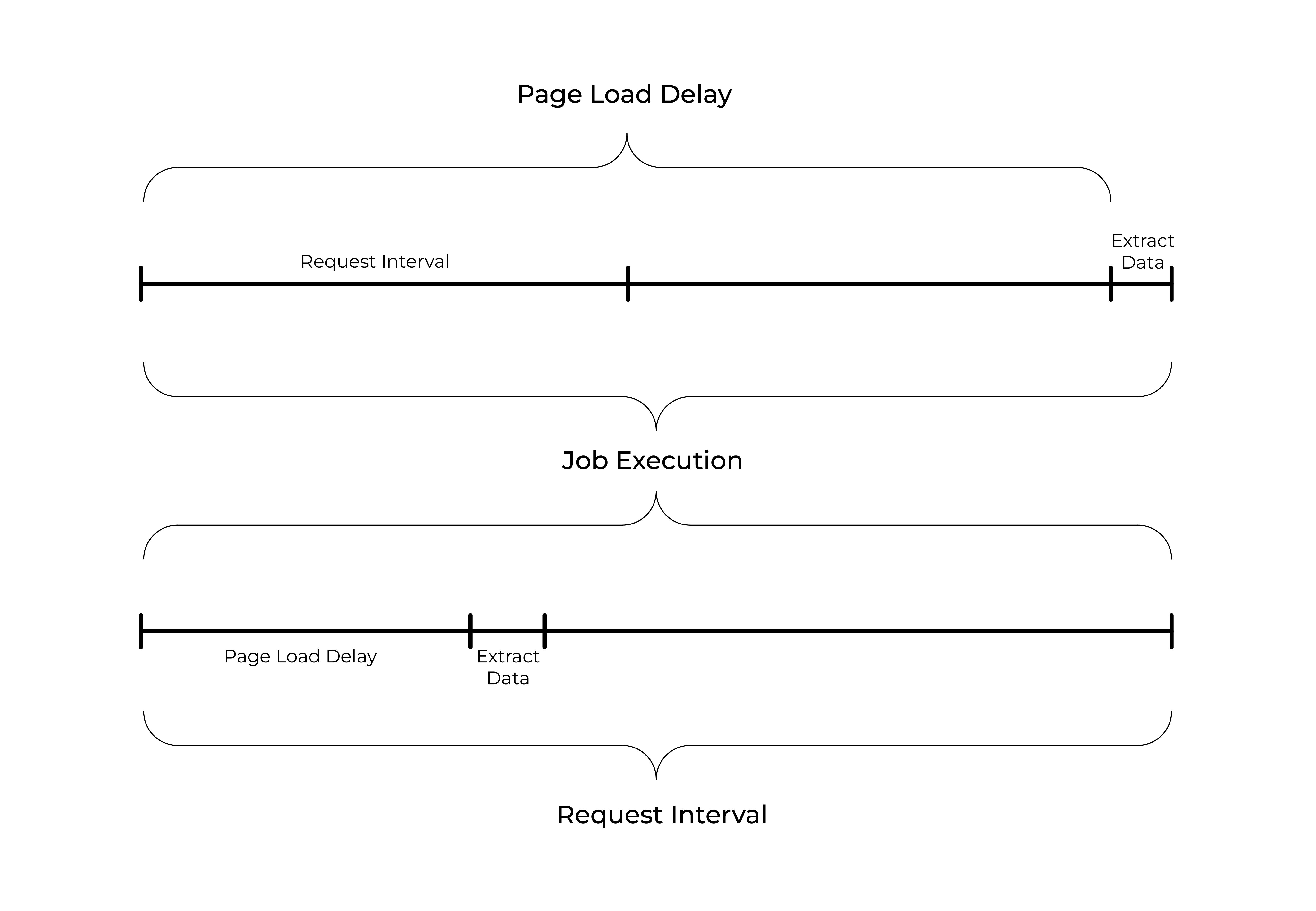

Two knobs matter for fragile sites:

- Request interval: minimum time between HTTP requests (be polite; this reduces server load and the risk of being blocked)

- Page load delay: wait after load before running selectors (helps with late-rendered content)
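The request interval is easy to picture as code. Below is a minimal Python sketch, not the extension's implementation, of a loop that never starts a request until `interval_s` seconds have passed since the previous one. `PoliteScheduler` and `fetch_all` are hypothetical names, and `fetch` stands in for whatever downloads a page. Page load delay has no counterpart here, because plain HTTP fetching does not run the page's JavaScript.

```python
import time
from typing import Callable, Iterable, List


class PoliteScheduler:
    """Keep at least interval_s seconds between consecutive requests (hypothetical helper)."""

    def __init__(self, interval_s: float,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.interval_s = interval_s
        self.clock = clock
        self.sleep = sleep
        self.last_request = None  # clock value when the previous request started

    def wait_turn(self) -> float:
        """Sleep just long enough to respect the interval; return the seconds slept."""
        waited = 0.0
        if self.last_request is not None:
            elapsed = self.clock() - self.last_request
            waited = max(0.0, self.interval_s - elapsed)
            if waited > 0:
                self.sleep(waited)
        self.last_request = self.clock()
        return waited


def fetch_all(urls: Iterable[str], fetch: Callable[[str], str],
              scheduler: PoliteScheduler) -> List[str]:
    """Fetch each URL in turn, pausing between requests as the scheduler requires."""
    pages = []
    for url in urls:
        scheduler.wait_turn()
        pages.append(fetch(url))
    return pages
```

Injecting `clock` and `sleep` lets you test the timing without actually waiting; with the defaults, the scheduler uses `time.monotonic` and `time.sleep`.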

Browse and CSV

After scraping:

- Browse panel: inspect extracted rows in the tool

- Export data as CSV: download for spreadsheets or later loading into SQL
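Because this is a SQL course, the natural next step after export is loading the CSV into a database. The sketch below uses Python's standard `csv` and `sqlite3` modules and stores every column as TEXT, so nothing is lost before you decide on types. The helper names, table name, and demo columns are placeholders, not anything Web Scraper defines.

```python
import csv
import io
import sqlite3
from typing import TextIO


def quote_ident(name: str) -> str:
    """Quote an SQL identifier so headers with spaces or hyphens are safe."""
    return '"' + name.replace('"', '""') + '"'


def load_csv_into_sqlite(csv_file: TextIO, conn: sqlite3.Connection, table: str) -> int:
    """Create `table` with one TEXT column per CSV header, insert all rows, return the row count."""
    reader = csv.reader(csv_file)
    header = next(reader)
    columns = ", ".join(f"{quote_ident(name)} TEXT" for name in header)
    conn.execute(f"CREATE TABLE {quote_ident(table)} ({columns})")
    placeholders = ", ".join("?" for _ in header)
    rows = list(reader)
    conn.executemany(f"INSERT INTO {quote_ident(table)} VALUES ({placeholders})", rows)
    conn.commit()
    return len(rows)


# Demo with an in-memory CSV; the column names are placeholders.
demo_csv = io.StringIO("title,have\nExample A,12\nExample B,7\n")
conn = sqlite3.connect(":memory:")
print(load_csv_into_sqlite(demo_csv, conn, "releases"))  # 2
print(conn.execute("SELECT title FROM releases WHERE CAST(have AS INTEGER) > 10").fetchall())  # [('Example A',)]
```

For a real export, open the file with `open("releases.csv", newline="", encoding="utf-8-sig")`, where the file name is a placeholder and the `utf-8-sig` codec drops a byte-order mark if one is present. Then convert columns with `CAST` once you know their types.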

Part 5: Demo

Goal and URL

Goal: build one table where each row is a release you care about. Rows should combine:

- Listing fields from the search results (for example title and artist line as shown on the card), and

- Statistics from the release detail page after you follow the link (for example community Have and Want counts, average rating, and number of ratings, or other stats visible in the header or sidebar)

Discogs renders these fields in HTML whose structure can change; use Element preview and Data preview on a real release page to confirm your selectors.

Start from at least five search result pages for releases, sorted by community have count (descending):

https://www.discogs.com/search?sort=have%2Cdesc&type=release

Pagination: Discogs search uses a page query parameter. For five pages, a range start URL following the documentation pattern 1 adds page=[1-5] to the query string above.

If your browser copies a slightly different form of the path, keep the query string identical apart from page=[1-5]. Alternatively, add five separate start URLs (page=1 … page=5) with the + control next to the URL field.
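If you prefer five explicit start URLs, a short Python sketch can generate them from the search URL above so that the rest of the query string stays identical. `with_page` is a hypothetical helper built on the standard `urllib.parse` module; paste its output into the + URL fields.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

SEARCH_URL = "https://www.discogs.com/search?sort=have%2Cdesc&type=release"


def with_page(url: str, page: int) -> str:
    """Return url with its page query parameter set to `page`, other parameters unchanged."""
    parts = urlsplit(url)
    # Keep every existing parameter except an old page value, in its original order.
    query = [(k, v) for k, v in parse_qsl(parts.query) if k != "page"]
    query.append(("page", str(page)))
    return urlunsplit(parts._replace(query=urlencode(query)))


for n in range(1, 6):
    print(with_page(SEARCH_URL, n))
```

`urlencode` re-encodes the comma in `have,desc` as `%2C`, so the first line printed is the search URL with `&page=1` appended.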

Ethics and housekeeping

Discogs is a real commercial community. For class:

- Stay within Discogs Developer Terms and general Terms of Use; do not republish bulk data or circumvent access controls 6, 7

- Prefer a moderate request interval and page load delay; this is a teaching demo, not production mirroring

- The site's HTML changes over time; if selectors break, update their CSS using Element preview
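Checking robots.txt can be automated with Python's standard `urllib.robotparser`, which reports whether a path is disallowed for a user agent and whether a `Crawl-delay` is requested. The sketch below feeds it an invented sample file (not Discogs's actual robots.txt) so that it runs offline. For a real site you would read the site's own `/robots.txt`, for example with the parser's `set_url` and `read` methods.

```python
from urllib.robotparser import RobotFileParser

# Invented robots.txt for illustration; it is not Discogs's actual file.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def robots_check(robots_text: str, url: str, agent: str = "*") -> tuple:
    """Return (allowed, crawl_delay) for url according to robots_text."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())  # parse lines directly, no network access
    return parser.can_fetch(agent, url), parser.crawl_delay(agent)


print(robots_check(SAMPLE_ROBOTS, "https://example.com/search?page=1"))  # (True, 5)
print(robots_check(SAMPLE_ROBOTS, "https://example.com/private/data"))   # (False, 5)
```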

Suggested sitemap (recommended: listing plus detail statistics)

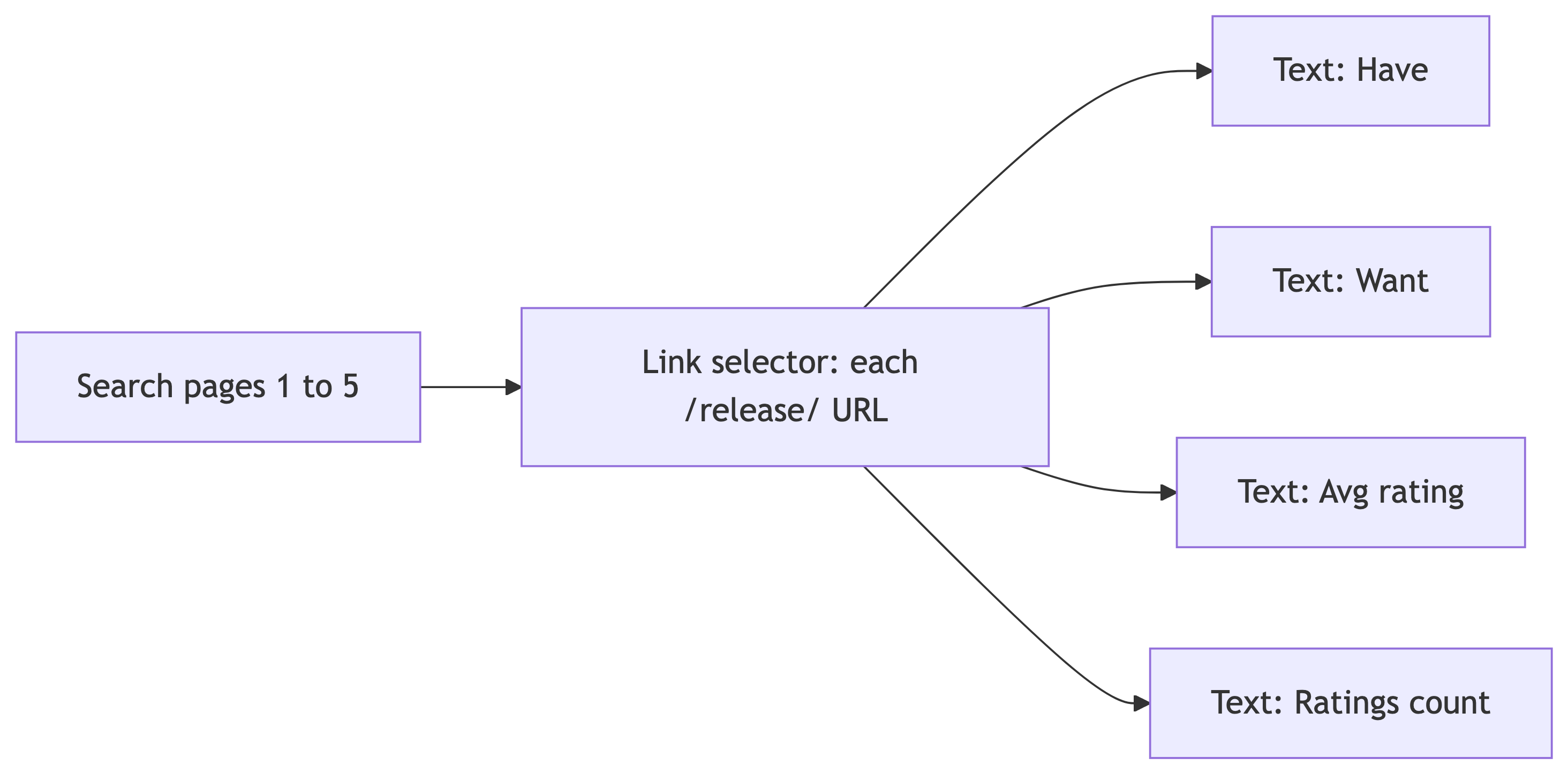

Primary path for class (search, then each release page):

- Start URL with page=[1-5] as above (or five explicit page= URLs).

- Link selector (multiple enabled) whose CSS matches the anchor of each search result that points to a /release/ URL. This opens the detail page for each album. Name it clearly (for example, release_link).

- As children of that Link selector, add Text selectors that run on the release page, not on the search grid. Aim for at least:

  - One statistic for Have (community copies owned)

  - One for Want (community want list)

  - Average rating and rating count, if shown; if the layout differs that day, substitute other numeric fields you see in the page chrome (for example, Last sold or Lowest price)

- Optional sibling Text selectors under the same Link selector (still on the detail page) for title or catalog number, if you want columns that only appear on the detail view.
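The tree above can also be written down as data. The Python sketch below builds a sitemap dictionary for the demo and checks that every parent reference points at a real selector. The field names (`_id`, `startUrl`, `selectors`, `parentSelectors`, `SelectorLink`, `SelectorText`) follow our understanding of the JSON that Web Scraper exports. Treat them as an assumption and compare against an export of a sitemap you built by hand before importing anything. Every `TODO-...` value is a placeholder for CSS you confirm with Element preview. `a[href*="/release/"]` is only a starting guess that may need scoping to the result card.

```python
import json

# Hypothetical sitemap for the class demo; "TODO-..." values are placeholders.
sitemap = {
    "_id": "discogs-top-have",
    "startUrl": ["https://www.discogs.com/search?sort=have%2Cdesc&type=release&page=[1-5]"],
    "selectors": [
        # Runs on each search page; keeps anchors whose href contains /release/.
        {"id": "release_link", "type": "SelectorLink", "parentSelectors": ["_root"],
         "selector": 'a[href*="/release/"]', "multiple": True},
        # The Text selectors below run on each release page opened by release_link.
        {"id": "have", "type": "SelectorText", "parentSelectors": ["release_link"],
         "selector": "TODO-have-css", "multiple": False},
        {"id": "want", "type": "SelectorText", "parentSelectors": ["release_link"],
         "selector": "TODO-want-css", "multiple": False},
        {"id": "rating_avg", "type": "SelectorText", "parentSelectors": ["release_link"],
         "selector": "TODO-rating-avg-css", "multiple": False},
        {"id": "rating_count", "type": "SelectorText", "parentSelectors": ["release_link"],
         "selector": "TODO-rating-count-css", "multiple": False},
    ],
}


def tree_problems(sitemap: dict) -> list:
    """List duplicate selector ids and parent references that point at no selector."""
    ids = [sel["id"] for sel in sitemap["selectors"]]
    problems = [f"duplicate id: {i}" for i in sorted(set(ids)) if ids.count(i) > 1]
    for sel in sitemap["selectors"]:
        for parent in sel["parentSelectors"]:
            if parent != "_root" and parent not in ids:
                problems.append(f"{sel['id']}: unknown parent {parent!r}")
    return problems


print(tree_problems(sitemap))  # []
print(json.dumps(sitemap, indent=2))
```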

Optional warm-up (listing only): before you add the Link selector, you can add Text selectors on the search page scoped to each card to capture title and artist from the snippet. Those columns are redundant with the detail page for some fields, but they help verify selectors before you crawl deeper.

Tips:

- Detail URLs look like `https://www.discogs.com/release/...`. If several anchors appear in the same card, do not confuse release links with master or artist links; restrict the Link selector with a CSS filter or parent scope so you only enqueue releases.

- If a statistic is split across elements (label plus number), try a parent Element selector on the stat block with a Text child, or a single Text selector with a tighter CSS selector. Re-check with Data preview.

- If result links load content in-page without a stable URL, switch to a Pagination selector or adjust the link type as described in Link selector 4.
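The "only enqueue releases" rule is a path filter. Outside the extension, you could express it as the Python sketch below, which uses the standard `html.parser` and `urllib.parse` modules. It keeps only links whose path begins with `/release/` and drops repeats, which matters if a card links to the same release twice (for example, from an image and a title). The card markup and paths are invented. Inside Web Scraper you get the same effect with a narrower CSS selector.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit


class AnchorCollector(HTMLParser):
    """Collect the href of every <a> tag, in document order."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def release_links(html: str, base_url: str) -> list:
    """Return unique absolute URLs whose path starts with /release/, in page order."""
    collector = AnchorCollector()
    collector.feed(html)
    seen, result = set(), []
    for href in collector.hrefs:
        absolute = urljoin(base_url, href)
        if urlsplit(absolute).path.startswith("/release/") and absolute not in seen:
            seen.add(absolute)
            result.append(absolute)
    return result


# Invented card markup: image and title link to the same release, plus master and artist links.
card = (
    '<div class="card"><a href="/release/1-Example"><img alt=""></a>'
    '<a href="/release/1-Example">Example</a>'
    '<a href="/master/2-Example">Master</a><a href="/artist/3-Someone">Artist</a></div>'
)
print(release_links(card, "https://www.discogs.com/search"))
# ['https://www.discogs.com/release/1-Example']
```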

Live demo checklist

- Open the search URL in a fresh tab; confirm you see results

- Open a single release in another tab; note where Have, Want, and rating numbers live in the DOM for your Text selectors

- Open Developer Tools, then the Web Scraper tab

- Create a sitemap and paste the start URL (range or five URLs)

- Add the Link selector for /release/ pages, then add Text children for detail statistics (and optional listing fields). Verify with Element preview and Data preview on both the search and release views

- Scrape with conservative timing and watch the popup until completion (following detail pages means more requests than a listing-only scrape)

- Browse rows: confirm one row per release and non-empty stat columns

- Export CSV
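The last two checklist items can be backed by a quick script once the CSV is downloaded. The Python sketch below uses only the standard library. It counts duplicate release URLs and stat cells that contain no number, and it shows one way to turn display text such as `12,345` into numbers before loading into SQL. It assumes commas are thousands separators and `.` is the decimal point. The column names `release_url`, `have`, and `want` are placeholders for whatever headers your export actually contains.

```python
import csv
import io
import re

NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?")


def to_number(text):
    """Parse display text such as '12,345' or '4.52 / 5' into a number, or None if no digits."""
    match = NUMBER.search(text or "")
    if match is None:
        return None
    digits = match.group().replace(",", "")  # drop thousands separators
    return float(digits) if "." in digits else int(digits)


def check_export(csv_file, key, stat_columns):
    """Count rows, repeated keys, and stat cells that contain no number."""
    seen = set()
    report = {"rows": 0, "duplicates": 0, "empty": {col: 0 for col in stat_columns}}
    for row in csv.DictReader(csv_file):
        report["rows"] += 1
        if row[key] in seen:
            report["duplicates"] += 1
        seen.add(row[key])
        for col in stat_columns:
            if to_number(row.get(col)) is None:
                report["empty"][col] += 1
    return report


# Invented sample export; your real headers will differ.
sample = io.StringIO(
    "release_url,have,want\n"
    'https://example.com/release/1,"1,200",5\n'
    'https://example.com/release/1,"1,200",5\n'
    "https://example.com/release/2,,7\n"
)
print(check_export(sample, "release_url", ["have", "want"]))
# {'rows': 3, 'duplicates': 1, 'empty': {'have': 1, 'want': 0}}
```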

References

Sources

1. Web Scraper Documentation. Scraping a site. https://webscraper.io/documentation/scraping-a-site

2. Web Scraper Documentation. Installation. https://webscraper.io/documentation/installation

3. Web Scraper Documentation. Open Web Scraper. https://webscraper.io/documentation/open-web-scraper

4. Web Scraper Documentation. Link selector. https://webscraper.io/documentation/selectors/link-selector

5. Web Scraper Documentation. Text selector. https://webscraper.io/documentation/selectors/text-selector

6. Discogs. Developer Terms of Service. https://www.discogs.com/developers/terms

7. Discogs. Terms of Use. https://www.discogs.com/help/doc/terms-of-use

Figures from the Web Scraper documentation

Embedded screenshots are the same figures used on Scraping a site 1, Open Web Scraper 3, Link selector 4, and Text selector 5. Copies are stored under data351/assets/images/webscraper-docs/ for this deck.

Video tutorials (from the docs)